Google Research and UW's FIT: What It Means for Virtual Try-On

FIT from Google Research and the University of Washington is the first large-scale fit-aware virtual try-on dataset with ground-truth body and garment measurements. We look at what is new, what is still missing, and what it could mean for virtual try-on experiences going forward.

TL;DR

What's new?

Google Research and UW introduced FIT, a 1.13 million-sample virtual try-on dataset with ground-truth body and garment measurements, plus a companion model called Fit-VTO.

Why does it matter?

Today's virtual try-on is good at helping shoppers visualize style, but falls short on sizing. FIT is the first serious attempt at try-on that reflects real fit, closing in on the original promise of reducing returns.

What's actually out there now?

The paper and project page are live; the dataset and code are marked "coming soon." Fit-VTO is a research prototype, not something brands can deploy today.

What to expect for the future?

Our guess? Probably a hybrid model. Today's virtual try-on would remain the default because it is low-friction for both shoppers and retailers. Fit-aware virtual try-on would become an opt-in upgrade when shoppers and retailers can share measurements.

The year VTO became mainstream

Virtual try-on has been technologically possible for years, but 2026 is when it began to move from an experimental add-on to a standard part of online apparel shopping. A clear sign was Zara's rollout of virtual try-on. After a quiet start in select markets through late 2025 and a Spain-first launch in January, Zara expanded the feature globally in March, turning it on in-app for one of the largest shopper bases in fashion. At FASHN AI, we've seen interest from name brands shift from exploratory conversations to near-term deployment planning.

Part of why VTO spread this fast is that the prerequisites for integration are minimal. You need a product image (already on the product page) and a user image (provided by the shopper). No retailer schema changes, no catalog re-tagging, no data-sharing agreements. The AI does the rest.

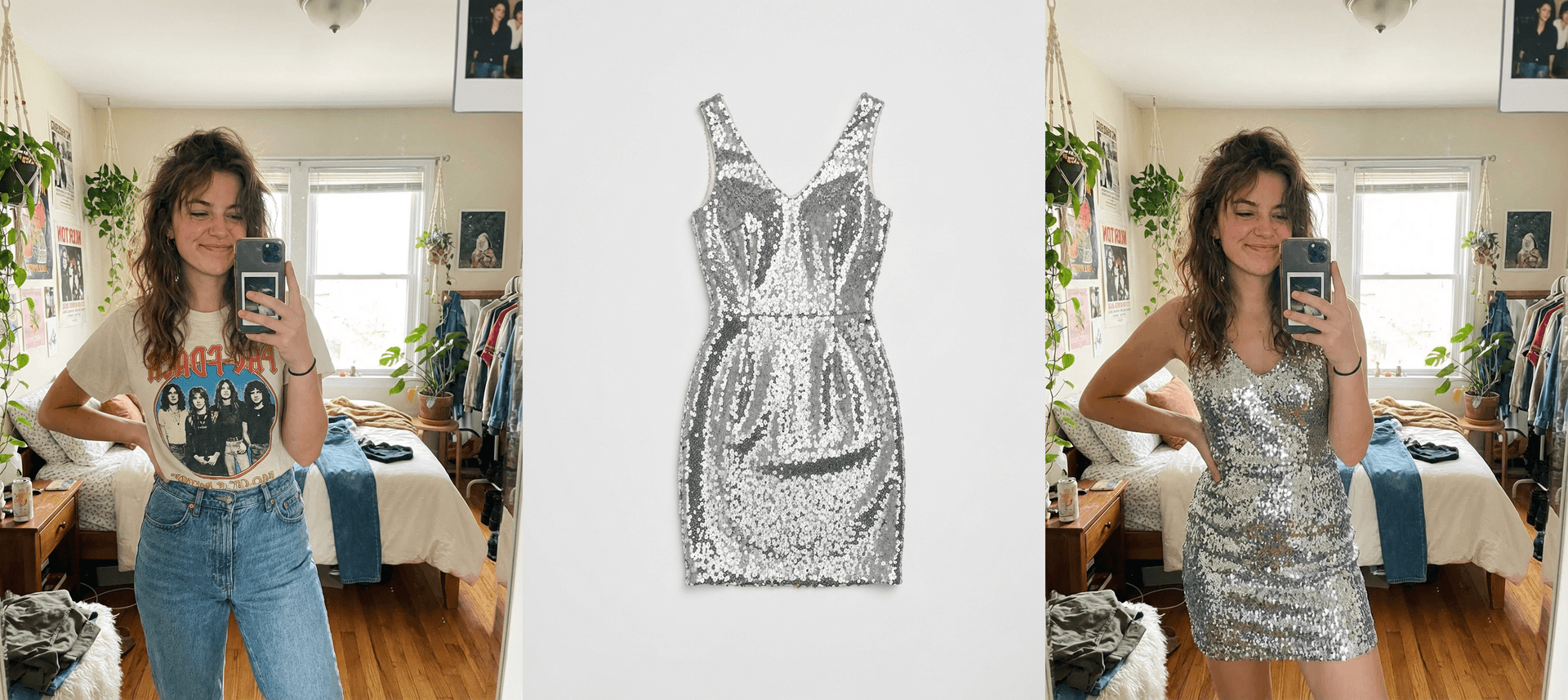

A virtual try-on result using the FASHN API: a product image and a user photo are the only inputs needed.

And the experience is genuinely good for what it does. Today's generative image-editing models can take your photo and a product image and produce a clean, plausible visualization of you wearing the item. That makes current VTO great for styling, inspiration, and mixing and matching. It is the digital equivalent of holding something up against yourself in the mirror.

But VTO hasn't solved the problem it was invented for

The original pitch for virtual try-on was not "help shoppers imagine an outfit." It was "reduce returns." The numbers help explain why. Apparel remains one of the highest-return categories in retail, and 39% of consumers who return apparel say fit is the reason. The garment arrives, it doesn't sit the way the shopper expected, back it goes. Virtual try-on was supposed to help prevent that kind of fit-driven return.

Current VTO still falls short on that goal. The models powering today's try-on experiences are generative image editors trained to produce plausible, aesthetic outputs. Given a person and a garment, they generate a clean, well-fitted visualization regardless of whether the garment would actually fit that person. A size M shirt and a size XL shirt on the same shopper look broadly similar in the simulation, even though they would feel noticeably different in real life.

A try-on result from the FASHN API. The output looks natural and well-fitted, but that does not guarantee the garment would actually fit this way in real life.

Why nobody built a fit-aware VTO dataset until now

If the fit gap is so well-known, why hadn't anyone built a measurement-rich dataset years ago? Three main challenges.

Scale. Modern generative models generally need training data in the millions of samples. That alone is a big undertaking.

Triplet curation. To learn real try-on, a model needs three images: the product image of the target garment, an image of the person wearing that garment, and an image of the same person wearing something else in the same pose. There is no natural e-commerce workflow that creates that data at scale, so researchers have to synthesize it or approximate it from simpler pairs. Making those synthetic triplets realistic enough to transfer back to real photos is a hard problem. We ran into the same issue at FASHN and addressed it with a synthetic triplet pipeline in FASHN v1.5.

Measurement annotation. This is the hardest part. A fit-aware model needs ground-truth measurements for every body and every garment in every sample. Collecting that from the real world means deep cooperation with shoppers willing to share exact body measurements and retailers willing to share precise per-garment specs beyond marketing S/M/L labels. Neither side has a strong natural incentive to contribute. This is the core reason fit-aware datasets did not exist at this scale until now.

What Google + UW just built

FIT is a two-part release from researchers at the University of Washington and Google Research. The dataset contains 1.13 million virtual try-on triplets, each annotated with ground-truth measurements for both the body and the garment. It is the first dataset of its kind at this scale. The companion model is Fit-VTO, a diffusion-based try-on model that uses those measurements at generation time.

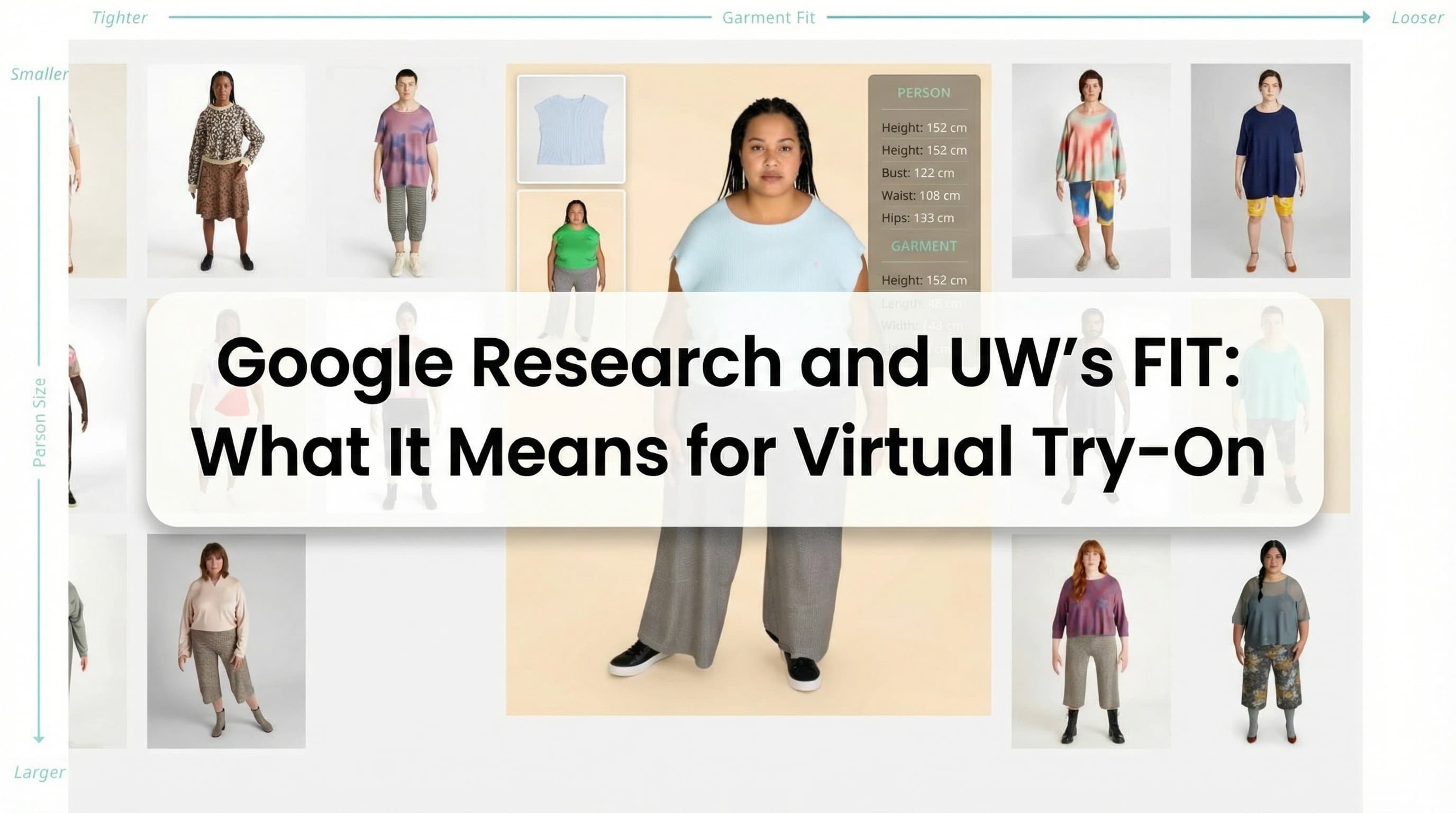

FIT dataset overview: layflat garment images (top), person images (middle), and try-on results (bottom) across a range of body and garment sizes, from tight fit to loose fit. Each sample includes precise body and garment measurements. Source: FIT project page.

They avoid the measurement-collection problem by generating the dataset synthetically. Parametric 3D garments are built and dressed onto parametric 3D bodies, then a physics engine simulates how each garment drapes. Because everything upstream is parametric, the measurements are ground-truth by construction. That removes the need for cooperation or data-sharing agreements. A re-texturing step converts the synthetic renders into photorealistic images while preserving the simulated geometry, and a paired-person step produces the same identity wearing two different garments so the full triplet structure is intact.

At a glance:

- 1,137,282 training triplets + 1,000 test samples

- 168 body shapes (XS–3XL) in 528 poses

- 158,483 unique upper-body garments

- Seven body measurements (bust, waist, hips, height, shoulder, length, sleeve) and three garment measurements (width, length, sleeve), all derived from the physics simulation

Synthetic data alone wasn't sufficient to train the model, though. The authors report that pure-synthetic training didn't transfer well, and they had to mix in roughly 330,000 real online fashion images (without measurement labels) to get the model to generalize. The final recipe is synthetic for ground-truth measurements, real imagery for photographic realism.

Fit-VTO results: same person, same garment, four different sizes (XS, M, XL, 3XL from left to right). The model generates different drape and fit for each size rather than defaulting to a single well-fitted result. Source: FIT project page.

The honest caveats

FIT is a major step, but it is still a research release, not a production-ready system.

Tightness granularity is unsolved. The physics engine distinguishes loose from fitted, but not tight from very tight. Two garments that are both smaller than the person's body size tend to render with similar tightness, even if one should look noticeably tighter than the other.

Tops only, front-facing poses only. The entire release is constrained to upper-body garments in casual front-facing poses. Pants, dresses, multi-layer apparel, and complex poses are listed as future work.

No human preference study. The paper evaluates with image-similarity metrics but doesn't publish a study of whether real shoppers actually judge fit-aware outputs as better. For a system intended to improve trust in fit, that is an important missing evaluation.

What this means for virtual try-on going forward

FIT does not change what brands can deploy tomorrow. What it changes is the target. Until now, most virtual try-on systems have been judged mainly on whether the result looks plausible. FIT makes room for a more demanding question: does the garment appear to fit this specific body in a believable way?

That does not mean today's low-friction version of VTO goes away. It works because it asks for very little: a product image and a shopper photo. A fit-aware system would ask for more from both sides. Shoppers would need to share body measurements, and retailers would need to provide per-garment specifications that go beyond standard size labels.

From our perspective at FASHN AI, the most likely outcome is not that fit-aware VTO replaces standard VTO, but that the two coexist. Standard VTO would remain the default for styling, inspiration, and quick purchase decisions. Fit-aware VTO would become an additional mode for cases where both the shopper and the retailer are willing to provide the data. If that model matures, the harder question will be when the added accuracy justifies the extra friction.

Bonus for the technically curious

A few findings from the paper that don't show up on the project page but are worth surfacing if you care about how this is built.

Using measurements as a text prompt reduces fit accuracy substantially. The obvious productization shortcut would be to feed measurements as natural-language tokens ("chest 38, waist 32, length 27") through a standard text encoder and let the existing cross-attention do the rest. The authors tested it. Fit accuracy dropped sharply in the ablation. Pre-trained text encoders aren't designed to represent structured numerical inputs.

Normal maps preserve simulated geometry in the photorealistic output. The re-texturing step (synthetic render → photorealistic image) is the most fragile part of a synthetic-first pipeline. It's where simulated geometry usually gets washed out by the photorealism model. The authors use a FLUX.1 dev model conditioned on surface normal maps of the simulated scene. Because normals encode geometry directly, the photorealistic output preserves the simulated drape and fit, rather than "improving" it into a generic good-looking result.

Heads and feet are inpainted separately. Synthetic bodies often have characteristic artifacts: bald avatars, weird toes. The authors route heads and feet through a second inpainting pass with a Gemini 2.5 image model, recompute surface normals on those regions, and stitch them back into the main image before the re-texturing step runs.

Closing

Virtual try-on went mainstream in 2026. FIT is the first large-scale, measurement-aware dataset aimed at closing the fit gap. Its existence should push future work toward benchmarks that measure fit directly. The paper and project page are live today; the dataset and code are expected to follow, and the work will be presented at SIGGRAPH 2026.

For brands looking to add virtual try-on to their store today, FASHN AI is a good place to start.